Augmented Reality Manual for Troubleshooting Dishwashers (iOS)

How to attach an AR object to a physical object that is not suitable for ARKit detection

The client is a European consumer electronics brand that manufactures a wide range of devices for in-home use. As part of its Digital Transformation activities, the company is looking for a technology solution to present user manuals in a simple and engaging manner.

Project Information

T&M (Time and Materials)

Kanban

iOS Developers

Project Manager

Deep Learning Developer

3D Designer

UI/UX Designer

QA Engineer

Problem

The company approached Softeq to create an Augmented Reality app for iOS devices that would allow customers to point their smartphones at a dishwashing machine and receive visual instructions overlaid on their camera feed in real time.

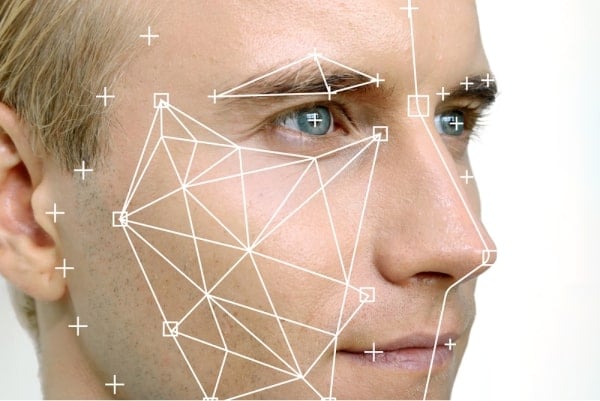

Augmented Reality renders graphical information on a three-dimensional scene. The technical challenge here is to “attach” superimposed imagery to a physical object in the real world. This is made more difficult by the fact that AR development tools may fail to detect physical objects with a low-texture, glossy, or transparent surface. Environmental factors, such as light intensity, can also affect object detection accuracy.

Softeq suggested building a mobile app prototype with ARKit to test the feasibility of the concept and devise a technical vision for future implementations. The prototype would identify several models of dishwashing machines from our client’s catalog and support the following use cases:

- Provide dishwasher loading tips

- Instruct users how to change a cycle after the dishwasher is started

- Help users clean the dishwashing machine

Technology-wise, the application was to meet the following performance requirements:

- Detect a dishwashing machine

- Attach AR objects to an identified dishwashing machine

- Enable users to view the AR objects from different angles and distances

Solution

Proof of Concept and Technology Stack Choice

When choosing the appropriate technology stack for the iOS app, our team had to address three challenges:

- Train the application to identify dishwashing machines in live video data obtained from a smartphone camera

- Calculate the dishwashing machine’s position in the real world as well as its angle of rotation

- Place AR objects on top of the camera feed relative to the dishwashing machine front panel
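The second and third challenges are linked: once the machine's position and angle of rotation are known, any point on the front panel can be converted into world coordinates. The following is a minimal sketch of that conversion, not the project's actual code. It assumes a right-handed frame with a vertical y axis and a machine that is rotated only about that axis; the function name `panel_anchor_world` and its parameters are hypothetical.

```python
import math


def panel_anchor_world(machine_pos, yaw_rad, offset_local):
    """Return the world position of a point given in the machine's local frame.

    machine_pos:  (x, y, z) of the front panel origin in world coordinates.
    yaw_rad:      rotation of the machine about the vertical (y) axis, in radians.
    offset_local: (x, y, z) offset from the panel origin, in the machine's frame.
    """
    ox, oy, oz = offset_local
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    # Rotate the local offset about the y axis...
    wx = c * ox + s * oz
    wz = -s * ox + c * oz
    # ...then translate by the machine's world position.
    px, py, pz = machine_pos
    return (px + wx, py + oy, pz + wz)


# Hypothetical example: a label placed 10 cm to the right of and 20 cm above
# the panel origin, for a machine that is not rotated.
print(panel_anchor_world((1.0, 0.0, -2.0), 0.0, (0.1, 0.2, 0.0)))
```

A full solution would use a complete 3D rotation rather than a single yaw angle, but the rotate-then-translate structure stays the same.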

We tested several ARKit configurations, including Object Detection and Image Recognition, to determine whether we could accomplish the tasks solely with the native iOS Augmented Reality tools. Eventually, the team decided to use only the ARKit World Tracking configuration, which positions AR objects in the physical space.

Additionally, we needed a third-party library capable of calculating the distance and rotation of an object in the real world relative to the camera, and for this we chose OpenCV. To improve the library’s pose estimation results, our iOS development team had to deactivate ARKit autofocus, perform extra calculations, and apply Kalman filtering to reduce the movement and distortion of virtual objects. We also used Apple’s Core ML framework to run a custom-trained neural network for real-time object detection.
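To illustrate why Kalman filtering steadies virtual objects, here is a minimal sketch of a one-dimensional filter applied to a noisy distance estimate. It is an assumption-laden example rather than the project's implementation: the source does not say which quantities were filtered or how the filter was tuned, so the class `ScalarKalman`, its variance parameters, and the sample readings are all hypothetical.

```python
class ScalarKalman:
    """Kalman filter for one slowly changing value (constant-state model)."""

    def __init__(self, process_var, measurement_var):
        self.q = process_var      # how much the true value may drift per step
        self.r = measurement_var  # how noisy each measurement is
        self.x = None             # current estimate
        self.p = None             # variance of the current estimate

    def update(self, z):
        if self.x is None:
            # The first measurement initializes the state.
            self.x, self.p = z, self.r
            return self.x
        # Predict: the value is assumed unchanged, but uncertainty grows.
        self.p += self.q
        # Correct: blend prediction and measurement using the Kalman gain.
        k = self.p / (self.p + self.r)
        self.x += k * (z - self.x)
        self.p *= 1.0 - k
        return self.x


# Hypothetical per-frame distance readings (in meters) that jitter around 1.0.
raw = [1.0, 1.2, 0.8, 1.1, 0.9]
kf = ScalarKalman(process_var=1e-3, measurement_var=0.05)
smoothed = [kf.update(z) for z in raw]
print(smoothed)
```

A smaller `process_var` gives steadier overlays at the cost of slower reaction to real camera movement, so the value is a trade-off rather than a fixed constant.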

Deep Learning

The ultimate goal was to teach Deep Learning algorithms to detect a dishwashing machine in live video data, identify its type, and deliver relevant troubleshooting tips. For that purpose, we set up a Google Cloud server and trained a MobileNetV2-SSD model using TensorFlow.
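An SSD-style detector typically returns several candidate boxes per frame, each with a class label and a confidence score. The sketch below shows one simple way to turn that output into a single "which machine is this" answer. The `Detection` type, the 0.5 threshold, and the labels `model_a` and `model_b` are hypothetical and are not taken from the project.

```python
from typing import NamedTuple, Optional, Sequence, Tuple


class Detection(NamedTuple):
    label: str                               # predicted machine type
    score: float                             # confidence between 0 and 1
    box: Tuple[float, float, float, float]   # x_min, y_min, x_max, y_max (normalized)


def best_detection(detections: Sequence[Detection],
                   min_score: float = 0.5) -> Optional[Detection]:
    """Return the highest-scoring detection at or above min_score, or None."""
    candidates = [d for d in detections if d.score >= min_score]
    return max(candidates, key=lambda d: d.score, default=None)


# Hypothetical output for a single camera frame.
frame = [
    Detection("model_a", 0.42, (0.10, 0.20, 0.60, 0.90)),
    Detection("model_b", 0.87, (0.12, 0.18, 0.62, 0.92)),
]
print(best_detection(frame))
```

Returning `None` when nothing clears the threshold lets the app keep searching instead of showing instructions for the wrong machine.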

To train the neural network model, we photographed the dishwashing machines from different angles and in different proximity zones and applied various augmentations to expand the dataset from 300 to 4500 images. The team also annotated the photographs using the Supervise.ly platform to improve the detection of certain parts of a dishwasher front panel where AR objects would be displayed.
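Growing the dataset from 300 to 4500 images means each photograph ends up as 15 training images on average. The source does not list the augmentations used, so the sketch below is only one hypothetical combination that produces that multiplier: three brightness factors crossed with five small rotations, where the pair `(1.0, 0)` leaves the photo unchanged.

```python
import itertools


def augmentation_plan(brightness_factors, rotations_deg):
    """Return every (brightness, rotation) pair to apply to each source photo."""
    return list(itertools.product(brightness_factors, rotations_deg))


# Hypothetical parameter choices; (1.0, 0) keeps the original image.
plan = augmentation_plan(
    brightness_factors=(0.7, 1.0, 1.3),
    rotations_deg=(-10, -5, 0, 5, 10),
)

source_photos = 300
print(len(plan), source_photos * len(plan))
```

Geometric augmentations such as rotation also require transforming the annotated regions, so that the labels still match the altered image.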

Result

Softeq completed the project on time and in line with the customer’s requirements. The solution features a neural network that detects several types of dishwashing machines manufactured by our client, and an iOS mobile app prototype.

Here’s how the application works:

- After starting the app, the user chooses the type of action they want to perform (load or clean the dishwasher, or change the cycle)

- The user proceeds to the camera view screen. The app detects the model of the dishwashing machine and places an AR object denoting Step 1 of the selected use case

- Text and image-based instructions appear on the screen in real time

- Alternatively, the user can open the Info Screen, which offers a step-by-step guide in a document-like format (as opposed to CGI appearing on top of the camera feed)

What’s Next?

Our client will study the demand for Augmented Reality manuals within the consumer electronics market. If the customer decides to proceed, Softeq will design a market-ready solution with a distinct monetization model. We can also scale the application so that it supports more home appliances and use cases. Expansion to the Android mobile platform is also possible with a different technology stack.