
Improving Vehicle Safety: Five Technological Pillars of ADAS (Part I)

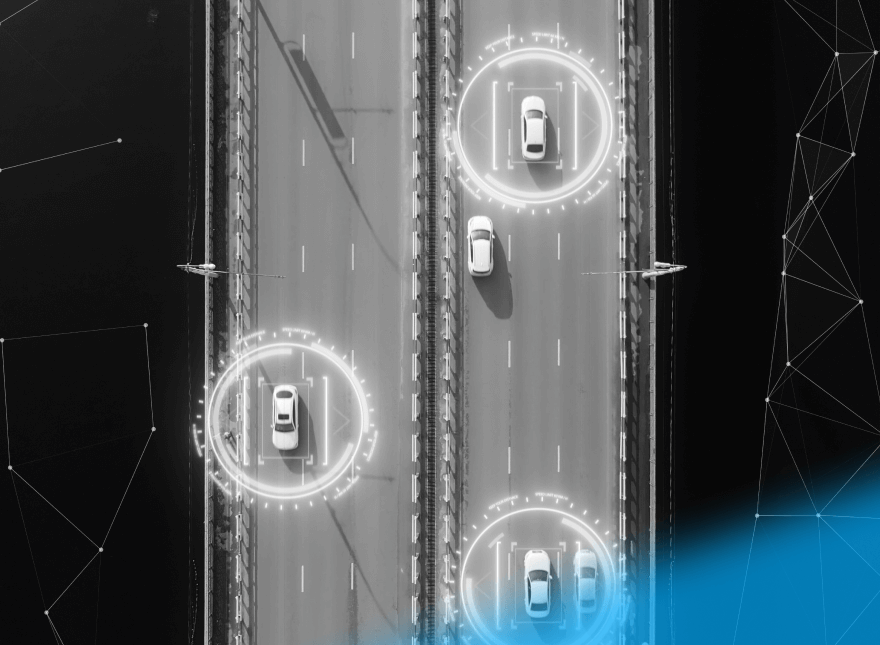

Advanced driver-assistance systems (ADAS) are vehicle-based intelligent systems with a human-machine interface. They are designed to improve the safety of drivers, passengers, and pedestrians by reducing the number of traffic accidents and fatalities.

In this article, we zoom in on the fundamental pillars of ADAS technology and explain how they improve vehicle safety and make driving more comfortable. ADAS use cases and their context are covered in a separate article.

Ensuring Connectivity: Technologies Supporting ADAS

Innovative technologies can significantly reduce the number of road accidents and improve road safety. Implementing these new safety technologies requires five key technology pillars:

- Sensors (to gather data about the vehicle's surroundings and pass it on for further processing)

- Processors (to process the data and make decisions using ECUs, CPUs, and GPUs)

- Computer vision and image processing algorithms (to power life-saving systems)

- Mapping solutions (to ensure greater precision and the ability to learn and improve maps over time)

- Actuators (to act promptly on the computed results)


Let’s take a closer look at each of them.

Sensor Technologies

Sensors are the eyes and ears of ADAS. Automotive companies need sensors that are small, cost-efficient, and low-power. There are four common types of sensors: radar units, lidars, camera systems, and ultrasonic transmitters.

Radar units are typically used in ADAS to measure distance. A unit emits a high-frequency radio signal; when the signal hits an object, it is reflected back to the sensor, which then calculates the distance between the vehicle and the object. This helps alert drivers to other cars, pedestrians, or obstructions on the road.
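Behind that distance calculation is a time-of-flight relation: the signal covers the vehicle-to-object distance twice, so the range is half of the speed of light multiplied by the round-trip time. Below is a minimal sketch, assuming an idealized pulsed measurement (many automotive radars are FMCW and derive the same delay from a frequency difference instead); the function name and the 400 ns echo are hypothetical:

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # radio waves travel at roughly c in air

def radar_range_m(round_trip_time_s: float) -> float:
    """Distance to a reflecting object from the echo's round-trip time.

    The signal travels out and back, so the one-way range is c * t / 2.
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_S * round_trip_time_s / 2.0

# Hypothetical echo arriving 400 ns after transmission
print(f"{radar_range_m(400e-9):.1f} m")  # prints "60.0 m"
```

At these time scales, a timing error of just 1 ns shifts the estimate by about 15 cm, which is one reason radar signal processing runs on dedicated hardware.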

Lidars use pulsed lasers to measure the distance to objects in their path, which makes them well suited to mapping the surroundings. A typical lidar-based system in an autonomous car consists of a laser source, a scanning mechanism, a GPS receiver and an inertial measurement unit (IMU), and computing hardware for processing the data.
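Each lidar return pairs a measured range with the direction of the beam, and mapping the surroundings starts by turning those pairs into points. Below is a minimal 2D sketch, assuming a single horizontal scan with the sensor at the origin, x pointing forward, y to the left, and angles measured counterclockwise from the forward axis. Real systems also use the GPS/IMU pose to place points in a world frame, and that step is omitted here. The function name and scan values are hypothetical:

```python
import math
from typing import List, Tuple

def scan_to_points(ranges_m: List[float], start_angle_rad: float,
                   angle_step_rad: float, max_range_m: float = 100.0
                   ) -> List[Tuple[float, float]]:
    """Convert one horizontal lidar scan from polar to Cartesian coordinates.

    ranges_m[i] is the distance measured by the beam at
    start_angle_rad + i * angle_step_rad. Returns outside (0, max_range_m]
    (for example, "no echo" reported as 0) are dropped.
    """
    points = []
    for i, r in enumerate(ranges_m):
        if not (0.0 < r <= max_range_m):
            continue
        theta = start_angle_rad + i * angle_step_rad
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points

# Hypothetical 3-beam scan: right (-90°), straight ahead (0°), left (+90°, no echo)
pts = scan_to_points([2.0, 10.0, 0.0], math.radians(-90), math.radians(90))
for x, y in pts:
    print(f"({x:.2f}, {y:.2f})")
```

Accumulating such points over many scans, and correcting each one with the vehicle's pose, is what turns individual range readings into a map of the surroundings.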

Cameras play an important role in monitoring a vehicle's surroundings. Surround-view systems provide a 360-degree view of the vehicle in its environment by combining multiple lower-resolution cameras placed around the vehicle, all designed to work together.
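Whether a set of cameras actually produces a full 360-degree view depends on where each one points and how wide its field of view is. Below is a small sketch that checks coverage, assuming each camera is described only by a hypothetical mounting yaw and horizontal field of view. Lens distortion, mounting height, and occlusion by the car body are ignored:

```python
from typing import List, Tuple

def uncovered_degrees(cameras: List[Tuple[float, float]]) -> List[int]:
    """Return the whole-degree headings (0..359) that no camera sees.

    Each camera is (yaw_deg, hfov_deg): the direction it points, measured
    from the vehicle's forward axis, and its horizontal field of view.
    Coverage is sampled once per degree.
    """
    gaps = []
    for heading in range(360):
        seen = False
        for yaw, hfov in cameras:
            # Smallest angle between this heading and the camera axis, in [0, 180]
            diff = abs((heading - yaw + 180) % 360 - 180)
            if diff <= hfov / 2:
                seen = True
                break
        if not seen:
            gaps.append(heading)
    return gaps

# Hypothetical surround-view layout: front, right, rear, left cameras
layout = [(0, 100), (90, 100), (180, 100), (270, 100)]
print(uncovered_degrees(layout))  # prints [] (full coverage)

narrow = [(0, 60), (90, 60), (180, 60), (270, 60)]
print(len(uncovered_degrees(narrow)))  # prints 116 (four 29-degree blind sectors)
```

The overlap between neighboring fields of view in the first layout is also what lets the images be stitched together into a single surround view.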

Ultrasonic transmitters are used for blind-spot detection, self-parking, and parking assistance. Parking assist features typically rely on ultrasonic sensors in the front and rear bumpers that bounce high-frequency sound waves off objects or people close to the vehicle.
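Parking sensors typically convert the echo's round-trip time into a distance. Because sound is far slower than light and its speed depends on air temperature, the temperature matters here. Below is a minimal sketch, assuming the common linear approximation c ≈ 331.3 + 0.606·T m/s (T in °C) and hypothetical warning thresholds. Production parking-assist systems tune these values per vehicle:

```python
def speed_of_sound_m_s(air_temp_c: float) -> float:
    """Approximate speed of sound in dry air (linear fit around 0 °C)."""
    return 331.3 + 0.606 * air_temp_c

def echo_distance_m(echo_time_s: float, air_temp_c: float = 20.0) -> float:
    """Distance to an obstacle from the ultrasonic echo's round-trip time."""
    return speed_of_sound_m_s(air_temp_c) * echo_time_s / 2.0

def parking_warning(distance_m: float) -> str:
    """Map a distance to a warning level (thresholds are hypothetical)."""
    if distance_m < 0.3:
        return "STOP"      # continuous tone
    if distance_m < 0.8:
        return "NEAR"      # fast beeping
    if distance_m < 1.5:
        return "CAUTION"   # slow beeping
    return "CLEAR"

# Hypothetical echo received 3 ms after the pulse, at 20 °C
d = echo_distance_m(0.003)
print(f"{d:.3f} m -> {parking_warning(d)}")  # prints "0.515 m -> NEAR"
```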

Processors

To process all the data gathered by cameras, radars, and lidar sensors, cars need high-performance processors. Microprocessors are prevalent in modern vehicles: a single car may contain as many as 100 of them, and that number keeps growing. This growth is driven by the need to:

- Implement new safety, convenience, and comfort features

- Reduce the amount of wiring in cars

- Simplify the manufacture and design of cars

- Control engine power to meet emissions and fuel economy standards

- Perform advanced car diagnostics

Onboard computers make cars quicker, safer, cleaner, more efficient, and more reliable by using processors to control braking, cruise control, entertainment systems, and more. In each unit, the software is closely integrated with the hardware, yet every unit is also designed to work with the others.
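To illustrate the kind of control loop such a processor runs, here is a minimal proportional-integral (PI) cruise-control sketch. It assumes a crude point-mass vehicle model, hypothetical gains, and a throttle command clamped to [0, 1]. It is not taken from any production ECU:

```python
from dataclasses import dataclass

@dataclass
class PICruiseController:
    kp: float = 0.5          # proportional gain (hypothetical)
    ki: float = 0.1          # integral gain (hypothetical)
    integral: float = 0.0    # accumulated speed error (m)

    def update(self, target_m_s: float, speed_m_s: float, dt_s: float) -> float:
        """Return a throttle command in [0, 1] for one control cycle."""
        error = target_m_s - speed_m_s
        self.integral += error * dt_s
        command = self.kp * error + self.ki * self.integral
        return min(max(command, 0.0), 1.0)  # saturate to the actuator's range

def simulate(target_m_s: float = 25.0, steps: int = 600, dt_s: float = 0.1) -> float:
    """Run the controller against a toy vehicle model; return the final speed."""
    controller = PICruiseController()
    speed = 20.0
    max_accel = 3.0   # m/s^2 at full throttle (toy model)
    drag = 0.02       # speed-proportional loss per second (toy model)
    for _ in range(steps):
        throttle = controller.update(target_m_s, speed, dt_s)
        speed += (max_accel * throttle - drag * speed) * dt_s
    return speed

print(f"{simulate():.2f} m/s")
```

The integral term is what removes the steady-state error. Without it, the proportional term alone would settle slightly below the target speed, because some throttle is always needed to overcome drag.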

Software Algorithms

Computer vision algorithms play a crucial role in ADAS development because they power life-saving systems in the car. They turn camera images into clear, accurate data that the car's systems can interpret and respond to.

Vision-based ADAS typically consist of two main components: a camera module or embedded vision system, and ADAS algorithms. Embedded vision systems are equipped with image sensors, image processing algorithms, cables, and a lens module.
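One of the simplest image processing steps behind lane detection is finding bright road paint against darker asphalt. Below is a minimal sketch that works on a single row of grayscale pixel values, assuming a fixed hypothetical brightness threshold and minimum marking width. Real pipelines typically add edge detection, perspective correction, and model fitting across many rows:

```python
from typing import List

def find_marking_centers(row: List[int], threshold: int = 180,
                         min_width: int = 3) -> List[float]:
    """Return the pixel-column centers of bright runs in one image row.

    row holds 8-bit grayscale intensities (0 = black, 255 = white).
    Runs of at least min_width pixels at or above threshold are treated
    as candidate lane markings; shorter runs are discarded as noise.
    """
    centers = []
    start = None
    for col, value in enumerate(row + [0]):  # dark sentinel closes a run at the edge
        if value >= threshold and start is None:
            start = col
        elif value < threshold and start is not None:
            if col - start >= min_width:
                centers.append((start + col - 1) / 2)
            start = None
    return centers

# Hypothetical row: dark road, a 4-px marking, a 1-px glint, a 5-px marking
row = [40] * 5 + [220] * 4 + [50] * 6 + [230] + [45] * 5 + [210] * 5 + [60] * 3
print(find_marking_centers(row))  # prints [6.5, 23.0]
```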

Computer vision systems are used for:

- Road sign detection

- Pedestrian detection

- Lane departure warning

- Vehicle detection

- Traffic lane detection

- Emergency braking systems

- Forward collision warning systems

- Automated parallel parking

- Speed change warnings
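Once the vision system has located the lane, several of these functions reduce to simple kinematics. For lane departure warning, a widely used measure is time to line crossing (TLC): the lateral gap to the lane marking divided by the speed at which the car drifts toward it. Below is a minimal sketch, assuming the vision system already supplies the vehicle's lateral offset from the lane center and its lateral velocity, and using a hypothetical 1-second warning threshold:

```python
import math

def time_to_line_crossing_s(lateral_offset_m: float, lateral_speed_m_s: float,
                            lane_width_m: float, vehicle_width_m: float) -> float:
    """Seconds until the side of the vehicle reaches a lane marking (inf if not drifting).

    lateral_offset_m: vehicle center relative to lane center (positive = left).
    lateral_speed_m_s: rate of change of that offset (positive = moving left).
    """
    # Free space on each side when the vehicle is perfectly centered
    half_free = (lane_width_m - vehicle_width_m) / 2
    if lateral_speed_m_s > 0:
        gap = half_free - lateral_offset_m   # distance to the left marking
    elif lateral_speed_m_s < 0:
        gap = half_free + lateral_offset_m   # distance to the right marking
    else:
        return math.inf
    return max(gap, 0.0) / abs(lateral_speed_m_s)

def lane_departure_warning(tlc_s: float, threshold_s: float = 1.0) -> bool:
    """Warn when the predicted line crossing is closer than the threshold."""
    return tlc_s < threshold_s

# Hypothetical: 3.5 m lane, 1.8 m car, 0.3 m left of center, drifting left at 0.4 m/s
tlc = time_to_line_crossing_s(0.3, 0.4, 3.5, 1.8)
print(f"TLC = {tlc:.2f} s, warn = {lane_departure_warning(tlc)}")
```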

Mapping Solutions

Digital maps complement the sensors in autonomous cars. The ADAS mapping function stores and updates geographical and infrastructure information gathered via vehicle sensors and uses it to determine the vehicle's exact location. It maintains this data and communicates it to the control system even when GPS coverage fails.
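When GPS drops out, one common way to keep a position estimate going is dead reckoning: propagating the last known pose from onboard motion sensors and later matching it against the stored map. The source does not say how the mapping function bridges GPS gaps, so the following is only a minimal sketch. It assumes wheel-speed and yaw-rate inputs at a fixed time step, and it accumulates drift unless something corrects it:

```python
import math
from dataclasses import dataclass

@dataclass
class Pose:
    x_m: float          # east, meters
    y_m: float          # north, meters
    heading_rad: float  # counterclockwise from east

def dead_reckon(pose: Pose, speed_m_s: float, yaw_rate_rad_s: float, dt_s: float) -> Pose:
    """Advance the pose one step from speed and yaw rate (midpoint heading)."""
    mid_heading = pose.heading_rad + 0.5 * yaw_rate_rad_s * dt_s
    return Pose(
        x_m=pose.x_m + speed_m_s * dt_s * math.cos(mid_heading),
        y_m=pose.y_m + speed_m_s * dt_s * math.sin(mid_heading),
        heading_rad=pose.heading_rad + yaw_rate_rad_s * dt_s,
    )

# Hypothetical: last GPS fix at the origin heading east, then 2 s without GPS at 20 m/s
pose = Pose(0.0, 0.0, 0.0)
for _ in range(20):
    pose = dead_reckon(pose, speed_m_s=20.0, yaw_rate_rad_s=0.0, dt_s=0.1)
print(f"x = {pose.x_m:.1f} m, y = {pose.y_m:.1f} m")  # prints "x = 40.0 m, y = 0.0 m"
```

Using the heading at the middle of each step, rather than at its start, keeps the error small on curves. Over a long GPS outage, however, small errors in speed and yaw rate still add up, which is why map matching is needed as well.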

Map data also lets the automated driving system anticipate the road ahead and build a precise picture of it. In effect, the car can see around corners and adapt its driving behavior when visibility is poor. Sensor fusion, which combines inputs from different sensors with information from digital maps, makes automated driving safer: it adds redundancy and lets the system handle more extreme circumstances.
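The redundancy argument can be made concrete with the simplest fusion rule: weight each independent estimate by the inverse of its variance. The fused result is never less certain than the best single input, and it degrades gracefully when one source drops out. Below is a minimal sketch, assuming independent, unbiased Gaussian errors and hypothetical variances. Production stacks typically use Kalman-filter variants instead:

```python
from typing import List, Optional, Tuple

def fuse(estimates: List[Tuple[Optional[float], float]]) -> Tuple[float, float]:
    """Inverse-variance weighted fusion of independent estimates.

    estimates: (value, variance) pairs; a value of None marks a source that
    is currently unavailable and is skipped. Returns (fused_value, fused_variance).
    """
    weight_sum = 0.0
    weighted_values = 0.0
    for value, variance in estimates:
        if value is None:
            continue  # e.g. a camera blinded by glare
        w = 1.0 / variance
        weight_sum += w
        weighted_values += w * value
    if weight_sum == 0.0:
        raise ValueError("no available estimates to fuse")
    return weighted_values / weight_sum, 1.0 / weight_sum

# Hypothetical distance to a stop line: (meters, variance in m^2)
lidar = (48.0, 1.0)
camera = (52.0, 4.0)
hd_map = (50.0, 0.25)
print(fuse([lidar, camera, hd_map]))
print(fuse([lidar, (None, 4.0), hd_map]))  # camera unavailable
```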

Actuators

The actuation system carries out vehicle motion requests (such as driving, braking, and steering) based on driver operation. It consists of three subsystems: powertrain, brake, and steering. ADAS actuators respond to object recognition results, which are processed into commands that control the vehicle through programmed sequences. Their responses range from visual, acoustic, and haptic warnings to electric power steering, autonomous acceleration, and braking.
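To show how a recognition result might become a programmed sequence of actuator commands, here is a sketch that maps the time to collision (TTC) with a detected object onto an escalating response, from warnings to partial and then full braking. The TTC thresholds, deceleration values, and command fields are all hypothetical. Real systems calibrate them per vehicle and also take driver input into account:

```python
import math
from dataclasses import dataclass

@dataclass
class ActuatorCommand:
    visual_warning: bool = False
    acoustic_warning: bool = False
    haptic_warning: bool = False   # e.g. steering-wheel vibration
    brake_decel_m_s2: float = 0.0  # requested deceleration, 0 = none

def time_to_collision_s(distance_m: float, closing_speed_m_s: float) -> float:
    """TTC assuming constant closing speed; inf if the gap is not shrinking."""
    if closing_speed_m_s <= 0:
        return math.inf
    return distance_m / closing_speed_m_s

def plan_response(ttc_s: float) -> ActuatorCommand:
    """Escalate from warnings to braking as TTC shrinks (thresholds hypothetical)."""
    if ttc_s > 2.6:
        return ActuatorCommand()
    if ttc_s > 1.6:
        return ActuatorCommand(visual_warning=True, acoustic_warning=True)
    if ttc_s > 0.9:
        return ActuatorCommand(True, True, True, brake_decel_m_s2=4.0)  # partial braking
    return ActuatorCommand(True, True, True, brake_decel_m_s2=9.0)      # full braking

# Hypothetical: object 30 m ahead, closing at 15 m/s, so TTC = 2 s
cmd = plan_response(time_to_collision_s(30.0, 15.0))
print(cmd)
```

Escalating in stages gives the driver a chance to react to the warnings before the system intervenes with the brakes.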

The Future is Driverless

The move to smart driving systems promises many benefits: fewer accidents caused by human error, lower vehicle costs, a reduced environmental impact of transport, and safer, more accessible driving.

Achieving greater autonomy, however, brings a host of challenges for startups and OEMs. They must innovate quickly, master connectivity to deliver V2X (vehicle-to-everything) capabilities, and keep their technology infrastructure stable, scalable, and reliable enough to process, store, and analyze huge amounts of data. If you need help solving these challenges and building a reliable ADAS solution, contact our engineering team.
