
Edge Computing and AI: How Smart Algorithms Turn Raw Data into Instant Insights

Artificial Intelligence (AI) and cloud-based IoT solutions have long gone hand in hand. The cloud provides the resources needed to train AI models for complex IoT systems on large datasets, while AI creates, refines, and updates the algorithms that make connected solutions autonomous.

Nonetheless, transmitting data to the cloud is no longer a silver-bullet solution in IoT software development. Uncontrolled latency, unstable network connections, and overall system unreliability are critical issues that can't be neglected. Moving AI tasks closer to the data sources, i.e., to the edge of the network, is a promising way to mitigate these risks. So promising, in fact, that Google, Apple, and Amazon are investing millions in edge computing so that their products can react to incoming requests immediately.

One of the most recent trends in IoT is building a decentralized network architecture using edge computing and keeping data processing as close as possible to where the data originates.

How do edge computing and AI work together? What are potential business use cases, and what benefits does this mixture bring? Last but not least: how can you get started with AI brought to the edge?

Why Edge AI Replaces the Cloud

A combination of edge computing and AI technologies, or Edge AI, allows developers to use AI algorithms to process the data that devices generate locally, either on the devices themselves or on a server close to them. As a result, devices can make decisions within milliseconds, without having to connect to the cloud or transfer data to other network edges.

In a traditional AI setting, a model is trained on a suitable dataset to perform specific tasks. Step by step, the model learns patterns that it can then recognize in other datasets with similar properties.

Once the model performs as desired on data it has not seen before, it is deployed in real-life cases, where it makes predictions, passes them to other software components, or visualizes them in a front-end interface for end users.

The cloud allows developers to leverage Artificial Intelligence technologies at scale. Training a model requires vast computational and data storage resources, which makes the cloud a natural choice. The cloud has pushed AI into the mainstream, but it is now posing serious challenges to the technology’s further development.

In the Industry 4.0 context, the need for real-time computing is growing fast—and the cloud is failing to satisfy it.

The very idea of the cloud is becoming incompatible with an ever-changing digital environment. The required data is first transferred to the cloud, where it is processed by the preset model, and only then is the output sent back to the end device. This round trip raises a number of challenges:

- Connection reliability. What if data transfer is slow or even impossible?

- Latency. While data travels back and forth, mission-critical applications cannot react to emerging issues in time

- Security and privacy concerns. Every attack on sensitive health, financial, personal, or other data sent to and stored in the cloud can be fatal for a business, which has to keep in mind an ever-growing number of cloud-related security risks

- High bandwidth costs. Processing large amounts of data in the cloud is expensive, as you need to buy a lot of capacity, such as GPU computing, from a cloud service. The extra bandwidth needed for fast response times is also costly and requires significant investment

And that is not even the full list of concerns that come to mind for specialists dealing with AI technologies and cloud infrastructure.

“Cost, performance, workload management, and data access. These are all challenges that data scientists face as they do their work in the cloud.”

Bret Greenstein, Vice President and Global Head of Artificial Intelligence at Cognizant
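
To put rough numbers on the latency concern, the sketch below compares a cloud round trip with local inference. It is a minimal model, assuming that cloud latency is simply upload time plus network round-trip time plus remote inference time; every figure in the example call (a 500 kB payload, a 10 Mbit/s uplink, an 80 ms round trip, 5 ms of cloud inference and 30 ms of edge inference) is a hypothetical placeholder, not a measurement.

```python
# Hypothetical latency model: all inputs are placeholders, not measurements.

def transfer_ms(payload_kb: float, bandwidth_mbps: float) -> float:
    """Milliseconds needed to upload payload_kb kilobytes at bandwidth_mbps Mbit/s."""
    bits = payload_kb * 1000 * 8                         # 1 kB = 1000 bytes, 1 byte = 8 bits
    return bits / (bandwidth_mbps * 1_000_000) * 1000    # seconds -> milliseconds


def cloud_latency_ms(payload_kb: float, bandwidth_mbps: float,
                     rtt_ms: float, cloud_infer_ms: float) -> float:
    # Upload the input, pay the network round trip, run the model remotely.
    return transfer_ms(payload_kb, bandwidth_mbps) + rtt_ms + cloud_infer_ms


def edge_latency_ms(edge_infer_ms: float) -> float:
    # No network hop: only the (typically slower) on-device inference counts.
    return edge_infer_ms


cloud = cloud_latency_ms(payload_kb=500, bandwidth_mbps=10, rtt_ms=80, cloud_infer_ms=5)
edge = edge_latency_ms(edge_infer_ms=30)
print(f"cloud: {cloud:.0f} ms, edge: {edge:.0f} ms")
```

With these assumed numbers the upload alone takes 400 ms, so even a slower on-device model answers more than an order of magnitude sooner.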

Now imagine a smart factory where specialists use connected hand-held tools for everyday operations. The devices collect, process, and analyze data in real time, providing the necessary information when it is needed and sending immediate notifications in emergencies. At the end of the working day, they transfer the data to the cloud for deeper analysis and other activities, such as predictive maintenance or further product development.

This is possible because data is processed locally at the edge: a model trained on a consolidated dataset learns to make decisions, trigger actuators, and perform other actions on its own. AI-based techniques enable complex automated decisions directly at the edge, resulting in faster, more private, and context-aware applications.
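
The smart-factory pattern just described (decide locally, alert immediately, upload in bulk at the end of the day) can be sketched as follows. `EdgeTool` is a hypothetical class, the threshold lambda stands in for a real on-device model, and the upload callback is a placeholder for whatever cloud client you actually use.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class EdgeTool:
    """Hypothetical hand-held tool: decides locally, uploads in a daily batch."""
    model: Callable[[float], bool]             # stand-in for an on-device model
    notify: Callable[[str], None] = print      # local alert channel
    buffer: List[float] = field(default_factory=list)

    def on_reading(self, value: float) -> bool:
        self.buffer.append(value)              # keep raw data for later cloud analysis
        is_emergency = self.model(value)       # decision made on the device, no cloud call
        if is_emergency:
            self.notify(f"ALERT: abnormal reading {value}")
        return is_emergency

    def end_of_day(self, upload: Callable[[List[float]], None]) -> int:
        batch, self.buffer = self.buffer, []   # hand off the day's data and reset
        upload(batch)                          # e.g. to a store used for predictive maintenance
        return len(batch)


tool = EdgeTool(model=lambda value: value > 90.0)   # hypothetical threshold "model"
for reading in [42.0, 95.5, 60.1]:
    tool.on_reading(reading)                        # alerts only for 95.5
uploaded: List[float] = []
tool.end_of_day(upload=uploaded.extend)             # stand-in for a cloud upload
```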

How Edge AI Works Under the Hood

“Edge AI happens when AI techniques are embedded in Internet of Things (IoT) endpoints, gateways, and other devices at the point of use.”

Jason Mann, Vice President of IoT at SAS

The surge in edge devices (sensors, mobile phones, edge nodes) demands a shift from the network core, i.e., cloud data centers, to the network edge, so that huge volumes of data can be processed close to where they are generated.

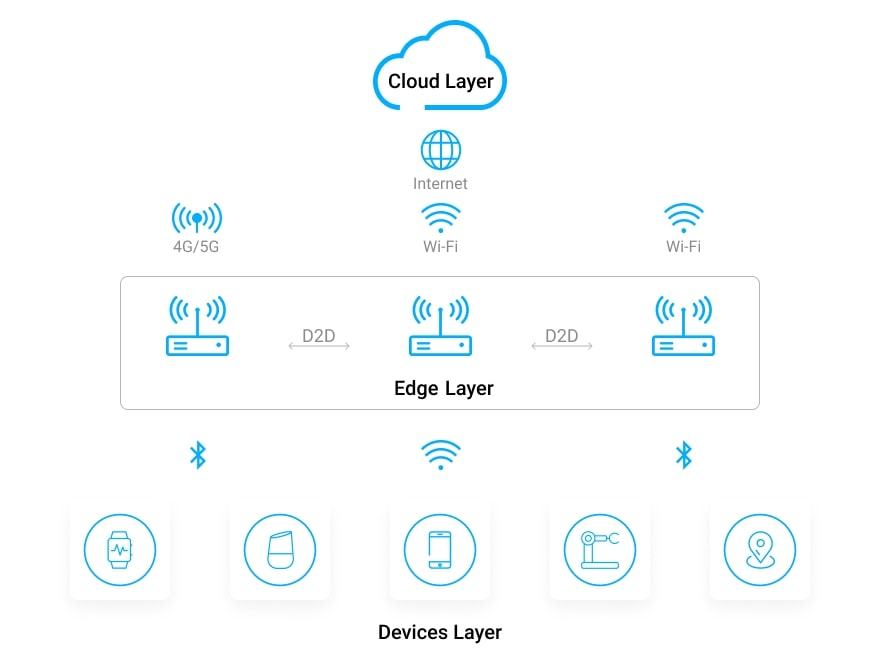

In an edge computing architecture, data processing is accomplished on devices (end nodes) and at the gateways (edge nodes).

Edge AI is typically deployed in a hybrid mode, which combines decentralized training “at the edge” with a centralized mode in which algorithms are revisited and updated.

- Nodes at the upper level act as simple collectors of the data generated by sensors and aggregators. This data is later used for training

- Machine Learning algorithms run locally on end nodes and embedded systems rather than on remote cloud-based servers. For example, wake words or phrases like “Alexa” or “OK Google” are detected by simple Machine Learning models stored locally on the speaker. When the smart speaker hears the wake word or phrase, it begins “listening”, i.e., streaming audio data to a remote server that can process the full request

- Model updates are sent back to the cloud, which aggregates them and sends the improved model back to the end devices
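
The last step, where the cloud aggregates model updates from many devices, is often implemented with federated averaging (FedAvg). The article does not say which aggregation method is used, so treat this as one common choice rather than the method. In this minimal sketch, a model is a plain list of weights, each device takes a single gradient step on its own data, and the cloud averages the results weighted by how many samples each device saw.

```python
from typing import List, Sequence

Weights = List[float]


def local_update(weights: Weights, gradient: Sequence[float], lr: float = 0.1) -> Weights:
    """Device side: one gradient step computed from the device's own data."""
    return [w - lr * g for w, g in zip(weights, gradient)]


def federated_average(updates: Sequence[Weights], sample_counts: Sequence[int]) -> Weights:
    """Cloud side: average device models, weighting each by its number of samples."""
    total = sum(sample_counts)
    if total == 0:
        raise ValueError("at least one device must report samples")
    dims = len(updates[0])
    return [
        sum(update[i] * n for update, n in zip(updates, sample_counts)) / total
        for i in range(dims)
    ]


global_w = [1.0, 2.0]
dev_a = local_update(global_w, gradient=[1.0, 0.0])   # hypothetical gradient from device A
dev_b = local_update(global_w, gradient=[0.0, 1.0])   # hypothetical gradient from device B
new_global = federated_average([dev_a, dev_b], sample_counts=[100, 300])
```

Only the weights travel to the cloud; the raw data behind each gradient stays on the device, which is where the privacy benefit of this setup comes from.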

In an architecture that combines AI algorithms with edge computing, we achieve lower transmission latency, better data privacy, and reduced bandwidth costs. However, this comes at the cost of longer computation times and higher energy consumption on the resource-constrained edge devices.

Hence, the balance between the amount of AI computing done at the edge and in the cloud is flexible and application-dependent. When choosing the level of edge intelligence, one has to consider multiple factors: latency, energy efficiency, privacy, and bandwidth cost.
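
One way to make that trade-off explicit is a small placement rule covering the four factors just listed. The sketch below is a hypothetical heuristic, not an established algorithm: the `Workload` fields and the order in which the rules are checked are assumptions you would tune for a real application.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    latency_budget_ms: float     # hardest deadline the application tolerates
    edge_infer_ms: float         # estimated on-device inference time
    cloud_round_trip_ms: float   # estimated upload + network + cloud inference time
    sensitive_data: bool         # e.g. health or financial records
    on_battery: bool             # device energy is scarce
    bandwidth_costly: bool       # uplink is metered or expensive


def choose_placement(w: Workload) -> str:
    """Hypothetical rule of thumb: return 'edge' or 'cloud'."""
    edge_ok = w.edge_infer_ms <= w.latency_budget_ms
    cloud_ok = w.cloud_round_trip_ms <= w.latency_budget_ms
    if edge_ok and w.sensitive_data:
        return "edge"                          # privacy: keep raw data on the device
    if edge_ok != cloud_ok:
        return "edge" if edge_ok else "cloud"  # only one option meets the deadline
    if edge_ok and cloud_ok:
        if w.on_battery and not w.bandwidth_costly:
            return "cloud"                     # offload to save device energy
        return "edge"                          # avoid paying for bandwidth
    # Neither option meets the budget: fall back to whichever is faster.
    return "edge" if w.edge_infer_ms <= w.cloud_round_trip_ms else "cloud"


print(choose_placement(Workload(100, 30, 485, False, False, False)))
```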

Use Case 1: Manufacturing

Factory workers have to deal with myriad manufacturing processes in real time: fine-tuning equipment movements, temperature reduction, vibration control, and so on. In these conditions, latency must be kept to a minimum, and Edge AI helps bring data processing closer to the manufacturing plant and improve overall equipment effectiveness (OEE).
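
OEE is conventionally computed as the product of three ratios: availability (run time over planned production time), performance (ideal cycle time times units produced, over run time), and quality (good units over total units). A minimal sketch with hypothetical shift figures:

```python
from typing import NamedTuple


class OEE(NamedTuple):
    availability: float
    performance: float
    quality: float
    oee: float


def compute_oee(planned_min: float, run_min: float, ideal_cycle_min: float,
                total_count: int, good_count: int) -> OEE:
    availability = run_min / planned_min                    # share of planned time the line ran
    performance = ideal_cycle_min * total_count / run_min   # actual speed vs. ideal speed
    quality = good_count / total_count                      # share of units that were good
    return OEE(availability, performance, quality, availability * performance * quality)


# Hypothetical 8-hour shift: 432 of 480 planned minutes running,
# ideal cycle time 0.5 min, 820 units produced, 800 of them good.
result = compute_oee(480, 432, 0.5, 820, 800)
print(f"OEE = {result.oee:.1%}")
```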

Edge AI allows operators to train models in the actual environment and under the same conditions in which the device will operate: line speed, job style, ambient temperature and humidity, and so on.
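
Training in the actual environment can be as simple as letting the device learn what normal looks like on its own line. The sketch below swaps in a basic statistical technique (a running mean and standard deviation via Welford's online algorithm, with a z-score threshold) as a stand-in for a real model; the class name, the 3-sigma threshold, and the warm-up length are all assumptions.

```python
import math


class RunningBaseline:
    """Learns the normal range of one signal on the device (Welford's online algorithm)."""

    def __init__(self, z_threshold: float = 3.0, warmup: int = 30):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0                # running sum of squared deviations from the mean
        self.z_threshold = z_threshold
        self.warmup = warmup         # readings to observe before flagging anything

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def std(self) -> float:
        return math.sqrt(self.m2 / (self.n - 1)) if self.n > 1 else 0.0

    def is_anomaly(self, x: float) -> bool:
        if self.n < self.warmup or self.std() == 0.0:
            return False             # not enough local history yet
        return abs(x - self.mean) / self.std() > self.z_threshold

    def observe(self, x: float) -> bool:
        anomalous = self.is_anomaly(x)
        if not anomalous:
            self.update(x)           # only normal readings shape the baseline
        return anomalous


baseline = RunningBaseline(warmup=5)
for vibration in [1.0, 1.1, 0.9, 1.0, 1.05, 0.95]:   # hypothetical readings on this line
    baseline.observe(vibration)
print(baseline.observe(10.0))                        # True: far outside the learned range
```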

Daihen Corp., a Japanese industrial electronics company, provides a good example of effective Edge AI implementation. The company wanted to analyze the data provided by dozens of devices measuring material conditions and reduce the need for manual monitoring. By applying Machine Learning models on their highly constrained devices, the company managed to automate monitoring and control of the whole industrial transformer manufacturing process. Also, they added new sensors running on Edge AI algorithms. The sensors generate real-time data on how each part of the electric transformers is assembled and stored, track how long each stage takes to complete, and provide insight into any changes that might affect the quality of the components being produced.

Use Case 2: Transportation

McLaren Racing is a division of the McLaren Group that competes in Formula 1 Grand Prix races and World Championships. Every Formula 1 race car is equipped with more than 200 sensors, which stream over 100,000 data points per second and produce 100 gigabytes of data over a race weekend.

To win a race and keep team members safe, McLaren’s engineers and crew staff need instant, real-time access to the generated data to decide when to change tires, evaluate the safety of the track, and so on. There is no time to wait while the data is transferred to the cloud and back.

That's why McLaren has adopted edge computing as part of its Edge-Core-Cloud strategy: while crew members receive actionable data in real time during a race, the same data is sent to the central McLaren Technology Center, where it is analyzed in depth and fed into an ML-powered racing simulator to improve and refine AI algorithms.
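
The edge half of such an Edge-Core-Cloud split might look like the sketch below: a bounded window gives the pit wall an instant rolling view of one sensor, while every reading is also queued at full resolution for the core. McLaren's actual software is not described in the source, so `TelemetryWindow` and its methods are purely hypothetical.

```python
from collections import deque
from statistics import mean
from typing import Deque, List, Optional


class TelemetryWindow:
    """Hypothetical edge-side view over the most recent readings of one sensor."""

    def __init__(self, size: int):
        self.recent: Deque[float] = deque(maxlen=size)  # oldest readings drop off automatically
        self.to_core: List[float] = []                  # full-resolution copy for the core

    def push(self, value: float) -> None:
        self.recent.append(value)
        self.to_core.append(value)

    def rolling_mean(self) -> Optional[float]:
        # Instant summary for the crew, computed without any network hop.
        return mean(self.recent) if self.recent else None

    def drain_for_core(self) -> List[float]:
        batch, self.to_core = self.to_core, []
        return batch


tire_temp = TelemetryWindow(size=3)          # hypothetical tire-temperature channel
for reading in [100.0, 102.0, 104.0, 110.0]:
    tire_temp.push(reading)
print(tire_temp.rolling_mean())              # mean of the last three readings only
batch = tire_temp.drain_for_core()           # all four readings, forwarded to the core
```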

Use Case 3: Retail

Edge AI employed in video cameras may be used to track customer footfall and analyze buying patterns. For example, NVIDIA’s EGX AI platform built for physical stores and warehouses empowers video analytics applications, which digitize shopping locations, analyze anonymous shopper behavior inside stores, and optimize store layouts.

In another use case, video analytics can help identify pickpockets and track criminals using facial recognition software. With pattern and facial recognition done at the edge rather than in a centralized cloud, retailers get immediate alarm notifications along with lower latency and costs.
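
Footfall counting of the kind mentioned above is commonly done by tracking people across frames and counting when they cross a virtual line. The sketch below assumes an upstream detector and tracker (not shown, and not tied to NVIDIA's platform) that emit one `(track_id, y)` pair per person per frame; only these small tuples, rather than raw video, need to leave the camera.

```python
from typing import Dict, Iterable, List, Tuple

Detection = Tuple[int, float]   # (track_id, y coordinate of the person's centroid)


def count_entries(frames: Iterable[List[Detection]], line_y: float) -> int:
    """Count tracked people whose centroid crosses a virtual line top to bottom.

    Image y grows downward, so "top to bottom" means y increases across line_y.
    """
    last_y: Dict[int, float] = {}
    entries = 0
    for detections in frames:
        for track_id, y in detections:
            prev = last_y.get(track_id)
            if prev is not None and prev < line_y <= y:
                entries += 1          # moved from above the line to on or below it
            last_y[track_id] = y
    return entries


frames = [
    [(1, 10.0), (2, 80.0)],
    [(1, 40.0), (2, 60.0)],
    [(1, 60.0), (2, 30.0)],   # person 1 enters; person 2 leaves (not counted)
]
print(count_entries(frames, line_y=50.0))
```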

4 Steps for Getting Started with Edge AI

- Evaluate your Edge Computing readiness. You can’t leapfrog to Edge AI without a properly implemented edge solution in place. Once you have a stable solution architecture that leverages Edge Computing in conjunction with a cloud backend, you can proceed to integrating AI

- Do a thorough examination of your data. Since an AI model is trained on datasets, make sure that the data used for training is complete and unbiased, and that your data management practices are comprehensive and efficient. Otherwise, you will get incorrect and nonsensical answers. However, if you don’t have enough high-quality data to reap the benefits of AI, you can try substituting business logic for data-based learning

- Design for enterprise scale. An architecture involving Edge AI techniques must support model deployment and updates over time, new device and chip releases, operating system modifications, and migration to other cloud providers. It must be device- and cloud-agnostic and highly flexible

- Provide edge-level data storage and computational power. While developers working with cloud architectures rely heavily on GPUs in centralized locations, those rolling out edge compute strategies need to find a viable alternative to the heavy-duty computational capabilities of the centralized cloud. For example, Google has developed the Edge TPU, a version of its Tensor Processing Unit designed to run AI at the edge
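
The data examination step can start with a quick automated audit. The sketch below checks two of the properties mentioned, completeness and (as a rough proxy for bias) label balance; the function name, the 5% missing-value limit, and the 10:1 imbalance limit are hypothetical defaults, and a real audit would cover much more.

```python
from collections import Counter
from typing import List, Sequence


def audit_dataset(rows: Sequence[dict], label_key: str,
                  max_missing: float = 0.05, max_imbalance: float = 10.0) -> List[str]:
    """Return human-readable problems found in a labeled dataset (hypothetical thresholds)."""
    if not rows:
        return ["dataset is empty"]
    problems: List[str] = []
    # Completeness: share of rows with at least one missing (None) field.
    incomplete = sum(1 for row in rows if any(v is None for v in row.values()))
    if incomplete / len(rows) > max_missing:
        problems.append(f"{incomplete}/{len(rows)} rows have missing values")
    # Balance: ratio between the most and the least frequent label.
    counts = Counter(row.get(label_key) for row in rows if row.get(label_key) is not None)
    if len(counts) < 2:
        problems.append("fewer than two label classes present")
    else:
        ratio = max(counts.values()) / min(counts.values())
        if ratio > max_imbalance:
            problems.append(f"label imbalance ratio {ratio:.1f} exceeds {max_imbalance}")
    return problems


readings = [
    {"temp": 71.2, "label": "ok"},
    {"temp": None, "label": "ok"},        # missing sensor value
    {"temp": 98.4, "label": "fault"},
]
for problem in audit_dataset(readings, label_key="label"):
    print("data issue:", problem)
```

If the audit keeps flagging problems, the fallback suggested above applies: encode the decision as explicit business rules until enough good data is available.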

Major Trends in Edge AI

Though specialists don’t treat Edge Intelligence as a full-fledged substitute for cloud computing, it is already emerging as an effective tool for promoting and improving AI technologies in multiple domains. A key issue for AI is the latency between a particular machine and the system as a whole. Edge computing can greatly reduce this latency, so that an anomaly is corrected as soon as it is detected and action is taken immediately.

- Interest in Edge AI will only grow. According to Tractica LLC, the global number of AI edge devices is expected to jump from 161.4 million units in 2018 to 2.6 billion units by 2025

- The top AI-enabled edge devices include mobile phones, smart speakers, PCs/tablets, head-mounted displays, automotive systems, drones, robots, and security cameras

- According to P.K. Tseng, an analyst from TrendForce, “Big data, precision analytics, and higher-performance hardware have been the three big driving forces pushing AI from the cloud down to end devices and encouraging the combination of edge computing and AI”

- Hardware and AI chips are rapidly evolving and becoming more suitable for edge computing. Experts point to a trend towards self-contained chips that handle specific tasks, like imaging or visualization, and draw relatively little power compared to energy-intensive GPUs. However, as specialists note, memory still limits the size of AI and Machine Learning models: a more advanced model requires more processing power and more memory

- The main challenge so far is enabling AI algorithms to keep advancing and to become more precise
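
Two quick calculations put the trends above in perspective. The first derives the compound annual growth rate implied by the Tractica forecast (the rate is our own arithmetic, not a Tractica figure); the second shows why memory caps model size, using a hypothetical 25-million-parameter model and 8-bit quantization, a common compression technique the article itself does not mention.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by two data points."""
    return (end / start) ** (1 / years) - 1


def model_memory_mb(num_params: int, bytes_per_param: int) -> float:
    """Memory for the weights alone; activations and runtime overhead are excluded."""
    return num_params * bytes_per_param / 1e6


# Tractica forecast: 161.4 million units (2018) -> 2.6 billion units (2025).
growth = implied_cagr(161.4e6, 2.6e9, years=2025 - 2018)
print(f"implied growth: {growth:.1%} per year")

# Hypothetical 25-million-parameter model stored as float32 vs. int8.
print(model_memory_mb(25_000_000, 4), "MB as float32")
print(model_memory_mb(25_000_000, 1), "MB as int8")
```

The forecast works out to roughly 49% growth per year, and storing the same weights as 8-bit integers instead of 32-bit floats cuts their memory footprint by a factor of four.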
