How GStreamer Helps Apply WebRTC in IoT and Embedded Systems

The Internet of Things is burgeoning, and so is the number of camera-based connected devices that produce real-time media data and can potentially send it to a PC or smartphone. But there’s a catch.

Most users don’t need a standalone app to play audio or video clips: they have browsers for that. And here’s where WebRTC comes into play by integrating an IP camera with a browser. This relatively new technology might not ensure maximum device coverage at the moment, but it easily beats its counterparts (such as HLS and MPEG-DASH) in terms of latency.

This article will give a brief overview of WebRTC, describe its advantages, highlight its use cases in IoT, and outline what a WebRTC embedded device is. We’ll also explain how to integrate this technology with connected devices through the GStreamer WebRTC framework.

Introduction to WebRTC

Web Real-Time Communication (WebRTC) is an open-source technology, developed by Google and Ericsson. It allows developers to integrate real-time video and audio communication capabilities into web and mobile applications.

Simply put, WebRTC establishes peer-to-peer communication between web or mobile browsers without any additional plugins. It gains access to a device’s camera and microphone, and is capable of streaming media with only about a half-second lag. In fact, it’s regarded as the primary solution for real-time media transfer.

The rise of remote work has triggered a new stage of developing real-time applications with WebRTC. The technology’s use cases include:

- Audio/video conferencing applications (e.g. Zoom, Hangouts)

- Team collaboration tools and messengers (e.g. Facebook Messenger)

- Low-latency live video streaming services

Our Linphone-based VoIP mobile app is a vivid example of how WebRTC solutions allowed our clients to make their corporate communication more convenient and cost-effective.

WebRTC Working Principles

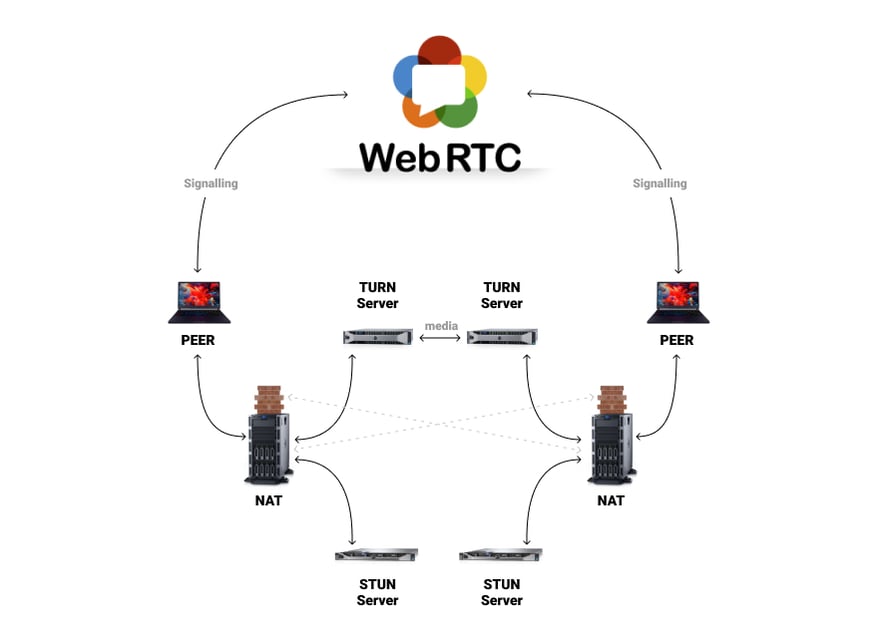

Since WebRTC uses web and mobile browsers to establish a peer-to-peer network, it cannot use the same communication protocols that browsers use for accessing websites. The challenge here is that the users’ laptops or smartphones sit behind network address translation (NAT) devices or firewalls. Unlike HTTPS websites, whose location is known to the entire Internet, laptops and smartphones usually do not have static, publicly reachable addresses. Thus, in order to begin a communication session between two users, browsers need to locate each other and obtain permission to exchange media data in real time.

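To set up a phone or video call with a user outside a home network, WebRTC relies on Session Traversal Utilities for NAT (STUN) and Traversal Using Relays around NAT (TURN) servers, along with signaling and session negotiation protocols. The latter include:

- Session Initiation Protocol (SIP), which starts, maintains, and terminates real-time sessions; WebRTC itself does not mandate a signaling protocol, so SIP is one common choice rather than a requirement

- Session Description Protocol (SDP), which describes the session (media types, codecs, and transport parameters) so that both peers can agree on them; it does not carry the media itself

- Interactive Connectivity Establishment (ICE) protocol, which gathers candidate network addresses (direct, STUN-discovered, and TURN-relayed) and tests them to find a working path for sending media between devices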
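
To make the role of SDP more concrete, here is a minimal Python sketch that reads a session description and lists the media sections and codecs it announces. The `SAMPLE_OFFER` text is a hypothetical, heavily trimmed offer written for illustration (real browser offers contain many more lines), and `summarize_sdp` is an illustrative helper, not part of any WebRTC library.

```python
# Hypothetical, heavily trimmed SDP offer; real offers carry many more lines
# (ICE credentials, DTLS fingerprints, extra codecs, and so on).
SAMPLE_OFFER = """\
v=0
o=- 4611731400430051336 2 IN IP4 127.0.0.1
s=-
t=0 0
m=audio 9 UDP/TLS/RTP/SAVPF 111
a=rtpmap:111 opus/48000/2
m=video 9 UDP/TLS/RTP/SAVPF 96
a=rtpmap:96 H264/90000
"""


def summarize_sdp(sdp: str) -> list:
    """Return one dict per m= section with its kind, transport, and codecs."""
    sections = []
    for line in sdp.splitlines():
        line = line.strip()
        if line.startswith("m="):
            # m=<media> <port> <proto> <payload types...>
            kind, _port, proto, *_payloads = line[2:].split()
            sections.append({"kind": kind, "proto": proto, "codecs": []})
        elif line.startswith("a=rtpmap:") and sections:
            # a=rtpmap:<payload type> <codec>/<clock rate>[/<channels>]
            _payload, encoding = line[len("a=rtpmap:"):].split(" ", 1)
            sections[-1]["codecs"].append(encoding.split("/")[0])
    return sections


for section in summarize_sdp(SAMPLE_OFFER):
    print(section["kind"], section["proto"], section["codecs"])
# audio UDP/TLS/RTP/SAVPF ['opus']
# video UDP/TLS/RTP/SAVPF ['H264']
```

The description only says what each peer intends to send and how. The `UDP/TLS/RTP/SAVPF` transport shows that the media itself travels separately, over DTLS-secured RTP.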

WebRTC Advantages

WebRTC’s flexibility allows any company to improve their business communication tools using fast and secure web applications.

- The technology is not restricted to any particular network, and it is supported by the top four browsers: Safari, Chrome, Firefox, and Microsoft Edge

- WebRTC operates as a client-side real-time media engine, which is customizable and extendable: it allows transferring other types of data besides media content

- WebRTC is built around security: the technology encrypts the data exchanged between devices using Datagram Transport Layer Security (DTLS) and the Secure Real-time Transport Protocol (SRTP), and asks for the user’s permission before accessing a computer’s camera and microphone

- WebRTC adapts the media bitrate to a user’s available bandwidth

- The technology is web-related, which makes it easier to write the client side of an app

- The real-time communication engine allows developers to “overlay” other types of data on top of media content

Before WebRTC, real-time media communication had to be implemented in C/C++. As a result, the development of custom conferencing and collaboration tools meant longer project timeframes and higher costs. Although WebRTC still uses a C/C++ technology stack under the hood, developers no longer have to dig into those layers: the JavaScript API on top is enough for building applications that run in browsers.

WebRTC Usage Expanding

Thanks to RESTful APIs, developers can create WebRTC embedded devices and applications with relative ease. This allows for using sensor data produced by connected devices to trigger alert notifications, audio calls, and video sessions. WebRTC introduces a new security layer to IoT solutions, and can be used as a safe data transfer channel. Finally, there’s much more to the technology than audio and video streaming: a WebRTC-based Smart Home security system, for instance, can both produce a live video feed and tell which door is open using the data from a connected lock.

How to Merge WebRTC into IoT Solutions

The Internet of Things concept revolves around real-time data exchange — and that’s what WebRTC is particularly good at.

For instance, the technology could be a welcome addition to an RFID-based retail store security system. In addition to label tags and security antennae, a WebRTC-based solution can incorporate an IP camera, which monitors store traffic and streams video data in real time to a web application running on a security manager’s computer. When an item that hasn't been paid for sets the detectors off, the manager can correlate the alert signal with camera footage, and identify the shoplifter.

Although you can compile the WebRTC native code package to create a peer-to-peer connection without an intermediary media server, WebRTC is not directly supported by most IoT devices and embedded systems.

As for smart cameras, you usually can't access the data produced by smart security systems outside a proprietary network, which prevents users from monitoring industrial facilities and connected homes remotely.

As a result, few business or consumer-facing IoT solutions currently provide WebRTC out of the box.

Adapting WebRTC to Embedded Systems with GStreamer

The official WebRTC Native APIs lack flexibility and can be difficult to work with. A better solution may be GStreamer—an open-source pipeline-based framework for creating multimedia streaming applications for desktops, connected devices, and servers. It has added a native WebRTC API to its feature set.

For better understanding, the GStreamer framework can be compared to a plumbing system with water instead of media data and GStreamer pipelines serving as the actual pipes. These pipes are capable of changing the quality and quantity of the water on the way from the public water supply (device one) to a home plumbing system (device two).

Suppose the source device can read video files. We can build a pipe bend (GStreamer demuxer) to separate outgoing traffic into audio and video data streams. Further down the pipeline, the data is decoded with H.264 (video) and Opus (audio) decoders and delivered to the target device, namely its video and audio output components, or to the cloud, where it can be analyzed using machine learning algorithms.
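
The file-playback scenario above can be sketched as a pipeline description in the text syntax that the `gst-launch-1.0` tool accepts. The Python helper below only assembles that string. It assumes a Matroska file with one H.264 video track and one Opus audio track, a path without spaces, and that the `avdec_h264` decoder from the gst-libav plugin set is installed. The function name and the `clip.mkv` file name are hypothetical.

```python
def playback_pipeline(path: str) -> str:
    """Build a gst-launch-1.0 description that demuxes and decodes one file.

    Assumes `path` contains no spaces, so no quoting is applied.
    """
    return " ".join([
        # Source element: read the container file from disk.
        f"filesrc location={path}",
        # Demuxer: split the container into separate audio and video streams.
        "! matroskademux name=demux",
        # Video branch: parse and decode H.264, then display it.
        "demux.video_0 ! queue ! h264parse ! avdec_h264",
        "! videoconvert ! autovideosink",
        # Audio branch: decode Opus, then play it.
        "demux.audio_0 ! queue ! opusdec",
        "! audioconvert ! audioresample ! autoaudiosink",
    ])


print("gst-launch-1.0 " + playback_pipeline("clip.mkv"))
```

Each `!` links one element to the next, and `demux.video_0` and `demux.audio_0` refer to the two output pads of the demuxer, so one source fans out into two independent branches.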

In GStreamer, those functional pipe bends are called elements. They can be divided into source elements, which produce data, sink elements, which accept it, and filter-like elements (such as demuxers, decoders, and converters), which transform it in between. The elements, in their turn, have pads: the interfaces with the outside world, which allow developers to connect elements based on their capabilities. Additionally, GStreamer features built-in synchronization mechanisms which ensure audio and video samples are played in the correct sequence and at the right time.
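
For the WebRTC case, the same building blocks can be arranged so that a camera feeds GStreamer's `webrtcbin` element. The following Python sketch only assembles such a pipeline description. It assumes a Linux camera exposed as `/dev/video0`, the `x264enc` encoder being available, and a public STUN server address chosen purely for illustration. The function name and the `webrtc` element name are hypothetical.

```python
def camera_to_webrtc(device: str = "/dev/video0",
                     stun: str = "stun://stun.l.google.com:19302") -> str:
    """Build a pipeline description that sends camera video into webrtcbin."""
    return " ".join([
        # webrtcbin handles ICE, DTLS, and SRTP for the peer connection.
        f"webrtcbin name=webrtc stun-server={stun}",
        # Source element: capture raw frames from a V4L2 camera.
        f"v4l2src device={device}",
        "! videoconvert ! queue",
        # Encode to H.264 with settings that favor low latency.
        "! x264enc tune=zerolatency",
        # Packetize the encoded video into RTP.
        "! rtph264pay",
        "! application/x-rtp,media=video,encoding-name=H264,payload=96",
        # Link the RTP stream to a sink pad requested on webrtcbin.
        "! webrtc.",
    ])


print(camera_to_webrtc())
```

A description like this is not enough to reach a browser on its own. The application still has to exchange SDP offers, answers, and ICE candidates with the remote peer over a signaling channel of its choice, typically by handling `webrtcbin` signals such as `on-negotiation-needed` and `on-ice-candidate`.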

Using Embedded WebRTC in Various Business Sectors

With WebRTC and GStreamer, we can build smarter home automation and enterprise security systems and more. Below you will find a list of embedded software development projects which can potentially use GStreamer:

- Intelligent surveillance: Although traditional enterprise and public closed-circuit television (CCTV) solutions have been around for years, they do not make autonomous decisions and require round-the-clock supervision. With WebRTC and GStreamer, IP camera manufacturers can apply video and audio effects to video streams, provide access to camera footage from outside the firewalled network, and implement custom plugins for intrusion or accident detection, thus shifting camera intelligence closer to the network edge.

- Shared Augmented Reality: A field worker can receive real-time instructions via a smart headset right from a remote expert, who places digital marks, schemes, and text within a shared Augmented Reality space. A similar approach can be adopted by eLearning companies, beauty industry experts, and retail brands looking to personalize customer experience.

- Smart transportation: In the automotive sector, WebRTC can help engineers create a superior navigation and driving experience through a combination of in-vehicle cameras, sensors, radars, and a high-frequency connection with an on-board computer (or that of a fleet dispatcher).

As more embedded software engineers discover GStreamer, we are likely to see more WebRTC embedded products, including industrial solutions for remote equipment maintenance, Smart Home products, telemedicine applications, connected cars collecting real-time telemetry data, and wearables.

About the Author

Dmitry Zhadinets is an embedded software development specialist at Softeq. With a PhD degree in Speech Analysis and Synthesis and over ten years of experience in embedded system development, Dmitry is involved in multiple enterprise-grade CCTV projects, and is particularly interested in media file transfer technologies. He has been in the IT industry since 2002.
